I have in mind a comedy skit where woke progressive students in a logic and critical thinking class are offended by the unit on fallacies because they see themselves in the materials.

The skit would be funny in some measure because the likelihood that the students would recognize themselves in the course materials is nil. Imagine, though, that they did. Progressive students would learn about the fallacy of misplaced concreteness and see the foundation of their worldview under assault. They’d take it personally since progressivism prepares them to take everything that way. “What do you mean it’s fallacious to say that all whites are racially privileged?” They would follow up with an ad hominem: “You’re racist!”

There are many other funny interactions possible in the skit. Confronted with the law of the excluded middle, progressive students would see the problem with the queer construct of intersex. The script might have them shriek “Transphobe!” and run and complain to the dean. The problem of circularity in what is presented as causal reasoning would provoke the outcry: “Racial disparities facially prove racism!” (Not that they would put it like that.) Etcetera.

For many years now, I have been interested not only in what people believe but also in how they form, justify, and defend their beliefs. What sparked my interest in this was an undergraduate logic and critical thinking class taught by Dr. Ron Bombardi, a professor of philosophical psychology (among other fascinating areas) at Middle Tennessee State University. This was way back in the 1980s.

I had always been fascinated by how those around me—and radio and television talking heads—routinely demonstrated fallacious reasoning, some clever enough to disguise it with sophistry. Dr. Bombardi’s class introduced me to language that allowed me to articulate what I was seeing. It also helped me identify other examples of fallacious reasoning.

I discovered during my undergraduate years that philosophy is not the only course of study that examines fallacious reasoning. In psychology, my major, the umbrella name for the study of this problem is judgment and decision-making. Intersecting fields under the umbrella examine how people evaluate evidence, make choices, and systematically depart from ideal logic.

One confronts in these studies research on cognitive bias. Cognitive bias explains the predictable ways human thinking goes off the rails—confirmation bias, motivated reasoning, and similar patterns that shape how people interpret information. I have penned several essays on this problem, showing how we can understand current-day politics in psychological terms. This is not ad hominem. Rather, it is understanding a problem with the hope that we might think more clearly.

I discovered in these studies that the problem of fallacious reasoning is not, for the most part, due to an intractable deficit in clear thinking. To be sure, human cognition is inherently subject to error because of the structure of the mind (there is, it should be noted, a range of variation in error-proneness). However, there are ways to avoid these errors. This is why we study judgment and decision-making.

Cognitive error is not the only problem. Much of the work in human cognition overlaps with social psychology (I had training in this area at the master’s and PhD levels), especially in areas concerned with group influence, identity, and self-justification.

This is where ideas like cognitive dissonance become pertinent. Cognitive dissonance explains why people feel discomfort when confronted with conflicting beliefs and typically resolve it by rationalizing rather than revising their views. I have written many essays on this, as well.

Returning to Bombardi’s domain, the parallel field in philosophy is epistemology, which studies the nature of belief conceptually rather than experimentally. I devoted a great deal of time to epistemology in my PhD program. Unfortunately, graduate students didn’t encounter much discussion of this branch of philosophy in class (and the metaphysics of social structure was fractured by the plethora of sociological theory). I took it upon myself to interrogate epistemic matters.

The grounding of such studies in my advanced degrees is found in the sociology of knowledge (Karl Mannheim, Alfred Schutz, Peter Berger, and Thomas Luckmann are standouts). Here, one examines the social construction of belief. After studying these ideas, all the pieces came together, and I was now qualified to assess not only arguments themselves, but also why people believed what they believed.

A key framework that bridges psychology, philosophy, and sociology is dual-process theory, which distinguishes between fast, intuitive thinking and slower, more analytical reasoning. The theory holds that both modes of thought are exhibited in the practical lives of the species. It explains how people handle routine tasks automatically while reserving mental energy for complex problems. At the same time, there is variation among individuals, indicating that some individuals are more deliberate thinkers.

In dual-process theory and human variation in cognitive style, I had an explanation for why “debates” with those around me could be so damn frustrating (I put debate in scare quotes, because what passes for debate is often not debate).

Fast, intuitive thinking is very often wrong, but audiences are impressed by it. Those who depend on this cognitive style are difficult to argue with, not because they are right, but because they can’t be wrong. Confidence and quickness of judgment are popularly seen as marks of superior intelligence.

Those who carefully craft arguments are boring, their presentation style tedious. This is especially true when one puts one’s arguments in writing and avoids debate. Who has time to read what a man with whom one disagrees thinks about things? I get it. Why is this or that writer so important that his ideas require consideration?

As a man who writes more than he debates, I have to take this in stride. I routinely encounter people who take issue with something I said because “what about this other thing?” I do talk about the other thing, and they would know that if they read my work. Once more, I get it: my work is too unimportant to invest any time in it. After all, I am already wrong.

The problem of judgment and decision-making drew me to teaching. After all, my good fortune of having professors like Dr. Bombardi improved my ability to reason. Why not impart what I have learned to younger generations? The benefits of education accrue not only to students. I, too, benefit from classroom preparation. The responsibility of teaching clear thinking requires the isolation of one’s own errors of thinking.

In his “Theses on Feuerbach” (1845), Karl Marx writes, “The educator must himself be educated.” This line has stuck with me throughout my teaching. In context, Marx is criticizing the notion that people can be changed solely by external instruction or by enlightened elites. His fuller point is that circumstances are changed by people. If circumstances are to be changed in a rational direction, then those responsible for shaping impressionable minds must themselves develop their ability to reason. At the same time, Marx’s material conception of history holds that those who endeavor to shape society are themselves shaped by society. Teachers aren’t all-knowing. Thus, the good teacher is also a good student. With Marx’s insight in mind, I see myself as an eternal student.

I have been teaching a course on the foundations of social research almost every semester, and each iteration includes more material on judgment and decision-making. Every year of preparation reinforces the imperative of logical argumentation. I seek out the clear thinkers and model my own thinking after them. I find flaws and correct them. I want to be right not out of ego, but because being right is a virtue.

Teaching students how to think effectively requires that I not shrink from controversial examples. My objective is to motivate engagement in self-examination, so I show students how I came to change my mind about matters that ideological commitments would have me leave alone.

In all this, I have a political goal in mind, and I am explicit about that. Understanding the problem of intuition and tightening one’s thinking facilitates Habermas’ ideal speech situation and thus advances the practice of civic dialogue. A properly functioning democracy depends on rational discourse. Since our actions affect the world around us, reason should guide our actions. We need to be more deliberate in our thinking.

Altogether, these areas and the attitude of critique—the dialectic—form a cohesive picture with a valuable lesson: humans are not just imperfect reasoners, but highly skilled at constructing justifications for what they already want to believe—a tension that would make my skit idea inherently funny, if the audience were well-versed in the language of judgment and decision-making.

That’s a big if. While the premise that those learning about fallacious reasoning would see themselves in the materials is potentially hilarious to my mind, the skit will likely not fly. Indeed, it might present yet another opportunity for an audience to take offense; as it is, when I criticize how people think, they think I am insulting them. I explore this problem in the next section of this essay.

* * *

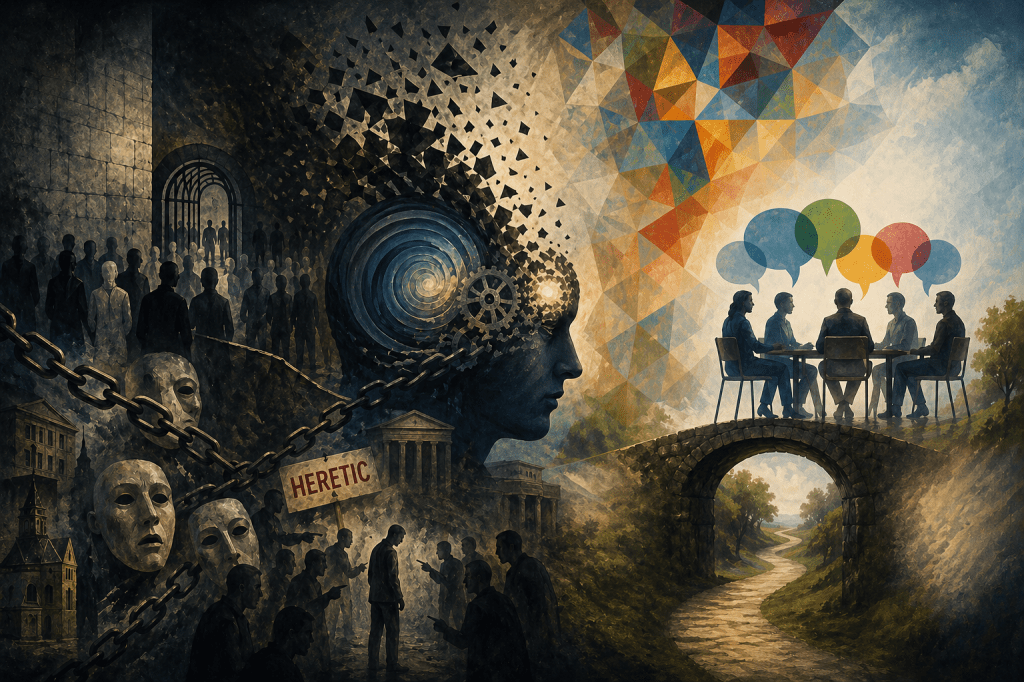

I want to connect the foregoing to my personal experience across areas of social life. I grew up in the buckle of the Bible Belt in Middle Tennessee. In this context, my views on religion were heretical. However, my experience growing up in a Christian culture is highly similar to my experience in academic institutions.

Across very different social environments, a strikingly similar pattern emerges: a perceived moral consensus that is treated as both obvious and binding, coupled with explicit or implicit sanctions against dissent. The content of the consensus differs—religious orthodoxy in one case, political orthodoxy in the other—but the structure of the social dynamic remains remarkably consistent.

This section analyzes that common structure through the three lenses I identified in the first section: philosophy (especially logic and epistemology), psychology (with emphasis on cognitive biases and social cognition), and sociology of knowledge (particularly social constructionism and group boundary maintenance).

At the philosophical level, the experience can be understood as a breakdown of what might be called epistemic pluralism: the recognition that reasonable disagreement is possible among informed and rational agents. In both environments described, certain propositions, such as the claim that expressing support for a particular political figure is morally unacceptable, function not as contestable claims but as hinge commitments: background assumptions that structure discourse but are themselves shielded from scrutiny.

From the standpoint of logic and critical thinking, this creates a situation in which disagreement is preemptively pathologized. Rather than engaging opposing views as arguments to be evaluated, the community treats them as evidence of moral or intellectual deficiency.

Such situations reflect a shift from argumentative rationality (evaluating reasons) to identity-protective reasoning (protecting group norms and status). There is something wrong with those who disagree because the expectation is that the moral and reasonable person agrees.

A double standard emerges. Those who are immoral and unreasonable are meant to be ridiculed, but when the moral and reasonable are ridiculed, offense is taken. I am not opposed to ridicule, as I have explained on this platform. Some ideas are absurd. But it is not necessarily because the ridiculous person is immoral or unreasonable. It is rather because the ideas he expresses are ridiculous, and because his cognitive style precludes him from abandoning or modifying those ideas.

Philosophers of deliberation often emphasize the importance of the principle of charity: interpreting opposing views in their strongest plausible form. In the environments I am describing, this principle is largely absent, if not practically excluded. Instead, dissenting positions are reduced to caricatures, making genuine dialogue difficult, if not impossible.

Psychologically, these dynamics are explained by several well-documented cognitive and social biases: pluralistic ignorance, the spiral of silence, in-group/out-group bias, and the moralization of belief. I chose these because they suggest opportunities for improving dialogue, and thus offer hope for advancing democratic deliberation.

Pluralistic ignorance is present when individuals privately dissent from the perceived consensus but assume (incorrectly) that they are alone, leading to self-censorship. This is a powerful force. It is why finding ways to promote mutual knowledge is so important for opening discourse. As dissent becomes less visible, the perceived consensus appears stronger, further discouraging deviation. I have written essays on this using Hans Christian Andersen’s fable of the naked emperor. Pluralistic ignorance is the source of the spiral of silence, wherein the confident manufacture the false perception that their ideas are valid.

In-group/out-group bias reinforces the spiral of silence. Here, group members are evaluated more charitably, while outsiders—or perceived deviants within the group—are judged more harshly. This problem is reinforced by the moralization of belief, in which political or religious positions become tied to moral identity. Disagreement is experienced not as intellectual divergence but as ethical violation. Growing up, I fell silent in a room of Christians because I knew I was an outsider; yet, these were my family and friends, and I wanted to belong.

As an atheist growing up in Middle Tennessee, I routinely found myself in rooms full of Christians who assumed that everyone present believed in God and that God was good all the time—and that those who didn’t agree were bad people. I kept my mouth shut because of what might happen if I didn’t.

I thought things would be different in academia. However, when I came to the university, I found the same dynamic. At faculty gatherings, my colleagues—almost invariably progressive in the humanities and social sciences—not only ranted about George W. Bush and Dick Cheney (whom progressives have since rehabilitated) and, more recently (and more intensely), about Donald Trump, but ridiculed those who expressed support for them. I did not vote for Bush. But I did vote for Trump. Yet I dare not say so; there are consequences for defending him.

I took my formative years in stride, since religious faith is not by definition a rational exercise. There, I was worried about social alienation and even physical retaliation. The assumption was that everybody was a Christian. But the same is true in academia. My colleagues at the university assume I belong to the progressive tribe.

It troubled me to discover this. The academic spirit is explicitly rooted in rational thought, I thought. This is the place where diversity of opinion is prized. Yet, on college campuses, support for Trump amounts to a heresy. I am expected to know better. One might think that the fact that a college professor (with advanced degrees, peer-reviewed publications, and so on) supports Trump would prompt my colleagues to reflect on their assumptions about him and his supporters. If an educated person agrees with Trump’s policies, maybe some time should be devoted to understanding why. Instead, I find that the instinct runs in the opposite direction, extending even to a desire to excommunicate me. If it were not for academic freedom, I surely would have been disciplined or expelled.

My silence for many years was an instance of what is known as preference falsification, where individuals conceal their true beliefs to avoid professional or social consequences. Preference falsification is particularly salient in environments where reputational costs are high, such as academia. In past essays, I have described this as a species of bad faith, where hedging and silence are necessary for survival, since even the principle of academic freedom does not insulate the heretic from negative consequences for his heresy. Here, social alienation is the problem.

Crucially, these mechanisms operate largely below the level of conscious intention. Participants in such environments often perceive themselves as open-minded and rational, even as their behavior reflects strong conformity pressures.

During a period of self-examination, I recognized that preference falsification was an emotional burden. Concealing or obfuscating my opinions felt dishonest. Moreover, it compromised my integrity. Certainly, it did not foster the discourse necessary for democratic deliberation.

It took me a long time to finally speak publicly about my support for Trump. But I still avoid talking about it around my colleagues. Freedom and Reason grew out of my frustration with this situation. I hoped that my essays would ease those around me into an awareness of my politics. Because my writings often come with explanations for how I landed where I did, I hoped they would, assuming charity, help others understand why I think this way. Maybe then, in my presence, others would hesitate to ridicule support for Trump, not for my sake, but for the sake of the millions of Americans who stand with the President. Things did not go as I had hoped, as I document in various essays.

From the perspective of the sociology of knowledge, both of the settings I have described can be understood as systems engaged in boundary maintenance—the process by which groups define and enforce the limits of acceptable belief.

In this framework, knowledge is not merely discovered but socially constructed and stabilized through institutions, norms, and power relations. What counts as “reasonable,” “informed,” or “moral” is shaped by these social processes. As a sociologist, I understand this. But it doesn’t make practical life any easier. That would require others to understand this, as well, and the reluctance to be charitable and tolerant seems an intractable problem.

My experiences illustrate a key feature of such systems: the existence of taken-for-granted assumptions that function as markers of group membership. In religious contexts, these are doctrinal and theological; in academic contexts, they are ideological and political. In both cases, dissent is not just disagreement but deviance—a violation of group identity.

The language of “heresy” and “excommunication,” while metaphorical in secular contexts, is sociologically apt. At the level of groups, internal dissent is more objectionable than external opposition; internal dissent threatens the coherence of the group’s identity. This explains the asymmetry I am describing: as someone presumed to belong to the group, my dissent carries greater social risk than it would if I were clearly identified as an outsider. The expectation that I “should know better” reflects the enforcement of internal norms rather than the evaluation of arguments. Hence, the intractability of the problem.

One of the most striking aspects of my experience is the structural similarity between two ostensibly opposed environments. Despite differences in content (religious vs. secular, conservative vs. progressive), both exhibit implicit sanctions against dissent, limited tolerance for viewpoint diversity, and the moralization of disagreement. However, while I don’t have to belong to a religious community, my livelihood depends on belonging in higher education. Life in an institution where progressivism is hegemonic, under the pressure of its illiberal spirit, makes dissent risky.

All of these intersect to incentivize self-censorship. This phenomenon is not tied to any particular ideology but is instead a general feature of human social organization. Groups, regardless of their stated commitments to openness or truth-seeking, tend to develop mechanisms that stabilize shared beliefs and discourage deviation. This is why it is so important to talk about how people come to believe the things they do and why they are so resistant to opinions that challenge those beliefs. They take it personally because it goes to identity.

But this is even more reason to focus on these problems, and why my writing has moved so strongly in this direction. These dynamics have important implications for how individuals form and express judgments. When dissent is suppressed, groups lose access to information that could challenge or refine their beliefs.

This is the problem of epistemic distortion. Apparent consensus creates an illusion of certainty, reducing discernment and critical scrutiny. This, in turn, leads to overconfidence in one’s opinions.

The moral polarization that results from this state of affairs is a significant impediment to the interrogation of ideas. Treating disagreement as a moral failure intensifies division and reduces the possibility of dialogue. The result, self-censorship, diminishes the freedom essential for democratic deliberation. Individuals refrain from expressing well-considered views, leading to a less robust intellectual environment. Without reasoned dialogue, no consensus is possible, and ideological and political polarization is entrenched.

From a decision-making perspective, this environment is suboptimal to say the least. It undermines the diversity of perspectives that is often necessary for sound judgment, particularly in complex or uncertain domains. But it’s worse than suboptimal. This sounds dramatic, I know, but it is true: the situation becomes an authoritarian one.

In a free and open society, persuasion is the central means for arriving at consensus positions that allow a people to move forward collectively with the common interests of a diverse population in mind. Robbed of or eschewing the power of persuasion, those who wish to impose their opinions on others often resort to forms of coercion, some subtle, others not so much.

Even when rhetoric appeals to common ground, it disguises the one-way street. Such rhetoric constitutes bad faith wrapped in weasel words. Once one sees it, the disingenuousness of it all becomes apparent. I’d be lying if I said I like being talked to that way—and by that way I mean condescension. Don’t pat me on the head. Make the argument—and try to make it using valid rules of reasoning. If not, then I am taking Schopenhauer Street.

* * *

The imagined scenario that began this essay is not merely comedic. It captures a deeper problem that has occupied my thinking for decades: not just what people believe, but how they come to believe it, how they justify it, and why they so often resist scrutiny. Drawing on insights from philosophy, psychology, and the sociology of knowledge, this essay explores both the structure of fallacious reasoning and the social conditions that sustain it. What begins as an abstract inquiry into logic and cognition ultimately converges with lived experience across very different domains of social life, revealing a common pattern—one in which dissent is pathologized, reasoning gives way to identity protection, and the possibility of genuine dialogue is quietly foreclosed.

As shown in the first section of this essay, the experiences described in the second section are not anomalies but instances of a broader pattern: the tendency of human groups to conflate shared belief with moral virtue and dissent with deviance, and to avoid recognizing these problems by resorting to fallacious reasoning. As a result, sophistry becomes the standard mode of discourse among intelligent people. By analyzing these dynamics through the lenses of philosophy, psychology, and sociology, we can better understand both their persistence and their costs.

Doing so, if possible, would prepare the population to engage in discourse using the rules of valid reasoning. Without such norms, even communities committed in principle to truth and inquiry risk reproducing the very forms of conformity they might otherwise criticize. I dwelled on my own frustrations because, in light of them, I am not sure it is possible. That progressives express favorable opinions about violence as a tool to suppress those with whom they disagree suggests that achieving Habermas’ ideal speech situation is impossible.

I will close on this. In prison, the Italian communist Antonio Gramsci, under the watchful eye of the censor, put the following sentiment in notebooks he was allowed to keep: “Pessimism of the intellect, optimism of the will.” Why was the man imprisoned? He was arrested and confined in 1926 by Mussolini’s fascist regime. That’s one way to stop ideas from spreading (if only temporarily). With the sentiment, Gramsci meant that one should face reality with clear-eyed, unsparing analysis—recognizing the problem of constraints without illusion—while still maintaining the determination and moral resolve to act and improve things. It’s a tension between sober judgment and committed effort. The takeaway for me is that the impossibility of rational discourse is not a good reason to abandon reason.